About artifacts

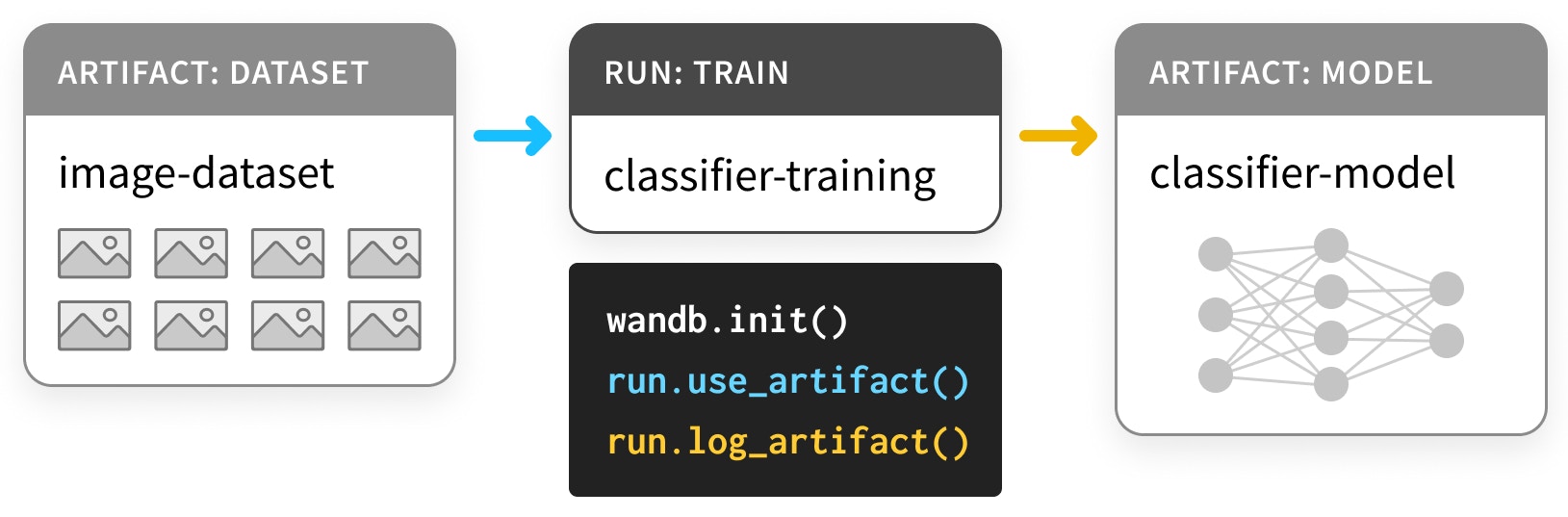

An artifact, like a Greek amphora, is a produced object: the output of a process. In ML, the most important artifacts are datasets and models. And, like the Cross of Coronado, these important artifacts belong in a museum. That is, they should be cataloged and organized so that you, your team, and the ML community at large can learn from them. After all, those who don’t track training are doomed to repeat it. Using our Artifacts API, you can log Artifacts as outputs of W&B Runs or use Artifacts as input to Runs, as in this diagram,

where a training run takes in a dataset and produces a model.

Artifacts and Runs together form a directed graph (a bipartite DAG), with nodes for Artifacts and Runs and arrows that connect a Run to the Artifacts it consumes or produces.
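To make the graph structure concrete, here is a minimal sketch in plain Python. It only illustrates the idea of a bipartite graph of Runs and Artifacts; it is not how W&B stores or computes its graph, and the node names (`load-data`, `mnist-raw`, `train`, `trained-model`) are made up for the example.

```python
from collections import defaultdict

# Edges always cross between the two kinds of node (that is the bipartite part):
#   Artifact -> Run  means "the Run consumes the Artifact"
#   Run -> Artifact  means "the Run produces the Artifact"
edges = defaultdict(list)


def consumes(run, artifact):
    edges[("artifact", artifact)].append(("run", run))


def produces(run, artifact):
    edges[("run", run)].append(("artifact", artifact))


# Hypothetical pipeline: a training Run takes in a dataset and produces a model.
produces("load-data", "mnist-raw")
consumes("train", "mnist-raw")
produces("train", "trained-model")


def lineage(node, seen=None):
    """Return every node reachable downstream of `node`."""
    seen = set() if seen is None else seen
    for nxt in edges.get(node, []):
        if nxt not in seen:
            seen.add(nxt)
            lineage(nxt, seen)
    return seen


downstream = lineage(("artifact", "mnist-raw"))
```

Here `downstream` contains the `train` Run and the `trained-model` Artifact: everything that depends on the raw dataset.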

Use artifacts to track models and datasets

Install and Import

Artifacts are part of our Python library, starting with version 0.9.2.

Like most parts of the ML Python stack, it’s available via pip.
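After installing with `pip install wandb`, you may want to confirm that the installed version is new enough for Artifacts. The sketch below is one way to do that with the standard library; the helper names `parse_version` and `wandb_supports_artifacts` are our own, not part of `wandb`.

```python
import re
from importlib import metadata

MINIMUM = (0, 9, 2)  # first version with Artifacts, per the text above


def parse_version(text):
    """Turn '0.9.2' into (0, 9, 2), ignoring suffixes such as 'rc1'."""
    parts = []
    for piece in text.split(".")[:3]:
        match = re.match(r"\d+", piece)
        parts.append(int(match.group()) if match else 0)
    return tuple(parts)


def wandb_supports_artifacts():
    """True if wandb is installed and at least version 0.9.2."""
    try:
        installed = metadata.version("wandb")
    except metadata.PackageNotFoundError:
        return False  # not installed yet: pip install wandb
    return parse_version(installed) >= MINIMUM
```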

Log a Dataset

First, let’s define some Artifacts. This example is based on this PyTorch “Basic MNIST Example”, but could just as easily have been done in TensorFlow, in any other framework, or in pure Python. We start with the Datasets:

- a training set, for choosing the parameters,
- a validation set, for choosing the hyperparameters,
- a testing set, for evaluating the final model.

Note that the code for loading the data is separated out from the code for loading and logging the data (load_and_log).

This is good practice.

In order to log these datasets as Artifacts, we just need to

- create a Run with wandb.init(),
- create an Artifact for the dataset, and
- save and log the associated files.
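The three steps above can be sketched as follows. This is a minimal illustration, not the tutorial’s original cell: `load` returns small synthetic splits in place of MNIST, the files are written as JSON rather than with `torch.save`, and the project name `artifacts-example` and job type `load-data` are placeholders we chose.

```python
import json
import random


def load(n_train=8, n_val=2, n_test=2, seed=0):
    """Stand-in for the real MNIST loader: three small synthetic splits."""
    rng = random.Random(seed)

    def split(n):
        return {
            "x": [[rng.random() for _ in range(4)] for _ in range(n)],
            "y": [rng.randrange(10) for _ in range(n)],
        }

    return {"training": split(n_train), "validation": split(n_val), "test": split(n_test)}


def load_and_log(project="artifacts-example"):
    import wandb  # imported here so load() stays usable without wandb

    # Step 1: create a Run.
    with wandb.init(project=project, job_type="load-data") as run:
        datasets = load()

        # Step 2: create an Artifact for the dataset.
        raw_data = wandb.Artifact(
            "mnist-raw",
            type="dataset",
            description="Raw dataset, split into train/val/test",
            metadata={"sizes": {name: len(d["y"]) for name, d in datasets.items()}},
        )

        # Step 3: save the files into the Artifact, then log it.
        for name, data in datasets.items():
            with raw_data.new_file(name + ".json", mode="w") as f:
                json.dump(data, f)
        run.log_artifact(raw_data)
```

Calling `load_and_log()` needs `wandb` installed and a logged-in account; `load()` on its own does not.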

wandb.init()

When we make the Run that’s going to produce the Artifacts,

we need to state which project it belongs to.

Depending on your workflow,

a project might be as big as car-that-drives-itself

or as small as iterative-architecture-experiment-117.

Best practice: if you can, keep all of the Runs that share Artifacts inside a single project. This keeps things simple, but don’t worry: Artifacts are portable across projects.

To help keep track of all the different kinds of jobs you might run, it’s useful to provide a job_type when making Runs.

This keeps the graph of your Artifacts nice and tidy.

Best practice: the job_type should be descriptive and correspond to a single step of your pipeline. Here, we separate out loading data from preprocessing data.
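One way to keep job types consistent is to define them in one place and build the `wandb.init()` arguments from that. The sketch below assumes a project called `artifacts-example` and a `load-data` job type; both names are placeholders, and `init_kwargs` and `start_run` are helpers of our own rather than part of the `wandb` API.

```python
PROJECT = "artifacts-example"  # hypothetical project shared by every step

# One descriptive job_type per pipeline step.
JOB_TYPES = {
    "load": "load-data",
    "preprocess": "preprocess-data",
}


def init_kwargs(step):
    """Keyword arguments for wandb.init() for the given pipeline step."""
    return {"project": PROJECT, "job_type": JOB_TYPES[step]}


def start_run(step):
    import wandb  # deferred so the helpers above work without wandb installed

    return wandb.init(**init_kwargs(step))
```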

wandb.Artifact

To log something as an Artifact, we have to first make an Artifact object.

Every Artifact has a name — that’s what the first argument sets.

Best practice: the name should be descriptive, but easy to remember and type. We like to use names that are hyphen-separated and correspond to variable names in the code.

It also has a type. Just like job_types for Runs, this is used for organizing the graph of Runs and Artifacts.

Best practice: the type should be simple. Use something more like dataset or model than mnist-data-YYYYMMDD.

You can also attach a description and some metadata, as a dictionary.

The metadata just needs to be serializable to JSON.

Best practice: the metadata should be as descriptive as possible.
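Because the metadata has to be serializable to JSON, it can help to check that before building the Artifact. A minimal sketch follows; `check_metadata` and `make_dataset_artifact` are our own helper names, and the metadata values are illustrative only.

```python
import json


def check_metadata(metadata):
    """Fail early (TypeError) if `metadata` cannot be serialized to JSON."""
    json.dumps(metadata)
    return metadata


def make_dataset_artifact():
    import wandb  # deferred so check_metadata works without wandb installed

    return wandb.Artifact(
        "mnist-raw",        # name: descriptive, hyphen-separated
        type="dataset",     # type: simple
        description="Raw MNIST dataset, split into train/val/test",
        metadata=check_metadata(
            {"source": "example", "splits": ["training", "validation", "test"]}
        ),
    )
```

Lists, dictionaries, strings, numbers, booleans, and `None` pass the check; objects such as sets or tensors need converting first.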

artifact.new_file and run.log_artifact

Once we’ve made an Artifact object, we need to add files to it.

You read that right: files with an s.

Artifacts are structured like directories,

with files and sub-directories.

Best practice: whenever it makes sense to do so, split the contents

of an Artifact up into multiple files. This will help if it comes time to scale.

We use the new_file method

to simultaneously write the file and attach it to the Artifact.

Below, we’ll use the add_file method,

which separates those two steps.

Once we’ve added all of our files, we need to log_artifact to wandb.ai.

You’ll notice some URLs appeared in the output,

including one for the Run page.

That’s where you can view the results of the Run,

including any Artifacts that got logged.

We’ll see some examples that make better use of the other components of the Run page below.

Use a Logged Dataset Artifact

Artifacts in W&B, unlike artifacts in museums,

are designed to be used, not just stored.

Let’s see what that looks like.

The cell below defines a pipeline step that takes in a raw dataset

and uses it to produce a preprocessed dataset:

normalized and shaped correctly.

Notice again that we split out the meat of the code, preprocess,

from the code that interfaces with wandb.
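As a rough idea of what the `preprocess` half might look like on its own, here is a pure-Python sketch that normalizes and reshapes images stored as nested lists. The tutorial’s version works on PyTorch tensors, so treat the data layout and the argument names `normalize` and `expand_dims` as assumptions.

```python
def preprocess(images, normalize=True, expand_dims=True):
    """Normalize pixel values to [0, 1] and add a channel axis.

    `images` is a list of 2-D images (lists of rows of 0-255 integers).
    """
    out = []
    for image in images:
        if normalize:
            image = [[pixel / 255 for pixel in row] for row in image]
        if expand_dims:
            image = [image]  # shape (H, W) -> (1, H, W)
        out.append(image)
    return out


# The same keyword arguments can double as a record of what was done.
steps = {"normalize": True, "expand_dims": True}
processed = preprocess([[[0, 255], [51, 102]]], **steps)
```

Nothing here touches `wandb`, which makes the function easy to test and reuse.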

Now for the code that instruments this preprocess step with wandb.Artifact logging.

Note that the example below both uses an Artifact,

which is new,

and logs it,

which is the same as the last step.

Artifacts are both the inputs and the outputs of Runs.

We use a new job_type, preprocess-data,

to make it clear that this is a different kind of job from the previous one.

Notice that the steps of the preprocessing are saved with the preprocessed_data as metadata.

If you’re trying to make your experiments reproducible,

capturing lots of metadata is a good idea.

Also, even though our dataset is a “large artifact”,

the download step is done in much less than a second.

The details are explained below.

run.use_artifact()

These steps are simpler. The consumer just needs to know the name of the Artifact, plus a bit more.

That “bit more” is the alias of the particular version of the Artifact you want.

By default, the last version to be uploaded is tagged latest.

Otherwise, you can pick older versions with v0/v1, etc.,

or you can provide your own aliases, like best or jit-script.

Just like Docker Hub tags,

aliases are separated from names with :,

so the Artifact we want is mnist-raw:latest.

Best practice: Keep aliases short and sweet. Use custom aliases like latest or best when you want an Artifact that satisfies some property.
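On the consuming side, a sketch might look like the following. `run.use_artifact` and `artifact.download` are the calls this section describes; `split_name_alias` and `fetch` are helper names of our own, and falling back to `latest` when no alias is given is a choice made by this helper.

```python
def split_name_alias(spec, default_alias="latest"):
    """Split 'name:alias' into its parts, e.g. 'mnist-raw:v0' -> ('mnist-raw', 'v0')."""
    name, _, alias = spec.partition(":")
    return name, alias or default_alias


def fetch(run, spec="mnist-raw:latest"):
    """Mark the Artifact as an input of `run` and return the local directory holding its files."""
    artifact = run.use_artifact(spec)
    return artifact.download()
```

With a real Run from `wandb.init()`, `fetch(run)` records `mnist-raw:latest` as an input of that Run and returns the path of the downloaded directory.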

artifact.download

Now, you may be worrying about the download call.

If we download another copy, won’t that double the burden on memory?

Don’t worry, friend. Before we actually download anything,

we check to see if the right version is available locally.

This uses the same technology that underlies torrenting and version control with git: hashing.
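The general idea of hash-based caching can be sketched with the standard library. This illustrates the technique only; it is not W&B’s implementation, and the choice of SHA-256 and the helper names `file_digest` and `needs_download` are assumptions.

```python
import hashlib
from pathlib import Path


def file_digest(path):
    """Hex digest of a file's contents."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def needs_download(local_path, expected_digest):
    """True unless a file with the expected contents is already on disk."""
    local_path = Path(local_path)
    return not (local_path.is_file() and file_digest(local_path) == expected_digest)
```

If the digest of the local copy matches the one recorded for the requested version, the download can be skipped entirely.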

As Artifacts are created and logged,

a folder called artifacts in the working directory

will start to fill with sub-directories,

one for each Artifact.

Check out its contents with !tree artifacts:
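Outside a notebook, or on a machine without the `tree` command, a few lines of Python give a similar listing. This is a small stand-in we wrote for illustration, not a W&B utility.

```python
from pathlib import Path


def tree(root, indent=""):
    """Return the lines of a simple, sorted directory listing."""
    lines = []
    for entry in sorted(Path(root).iterdir()):
        lines.append(indent + entry.name + ("/" if entry.is_dir() else ""))
        if entry.is_dir():
            lines.extend(tree(entry, indent + "    "))
    return lines


# Print the local Artifact cache, if there is one in the working directory.
if Path("artifacts").is_dir():
    print("\n".join(tree("artifacts")))
```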

The Artifacts page

Now that we’ve logged and used an Artifact,

let’s check out the Artifacts tab on the Run page.

Navigate to the Run page URL from the wandb output

and select the “Artifacts” tab from the left sidebar

(it’s the one with the database icon,

which looks like three hockey pucks stacked on top of one another).

Click a row in either the Input Artifacts table

or in the Output Artifacts table,

then check out the tabs (Overview, Metadata)

to see everything logged about the Artifact.

We particularly like the Graph View.

By default, it shows a graph

with the types of Artifacts

and the job_types of Runs as the two types of nodes,

with arrows to represent consumption and production.

Log a Model

That’s enough to see how the API for Artifacts works,

but let’s follow this example through to the end of the pipeline

so we can see how Artifacts can improve your ML workflow.

This first cell here builds a DNN model in PyTorch — a really simple ConvNet.

We’ll start by just initializing the model, not training it.

That way, we can repeat the training while keeping everything else constant.

We’re also using the run.config object to store all of the hyperparameters.

The dictionary version of that config object is a really useful piece of metadata, so make sure to include it.
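Here is a minimal sketch of passing the config along as metadata. The artifact name `initialized-model` and the helper names `describe_model` and `init_model_artifact` are assumptions; the only W&B pieces relied on are `run.config` and the `wandb.Artifact` constructor.

```python
def describe_model(run, name="initialized-model"):
    """Keyword arguments for wandb.Artifact, with the Run's config as metadata."""
    return {
        "name": name,
        "type": "model",
        "description": "Simple ConvNet, initialized but not trained",
        "metadata": dict(run.config),  # dictionary version of the config object
    }


def init_model_artifact(run):
    import wandb  # deferred so describe_model works without wandb installed

    return wandb.Artifact(**describe_model(run))
```

Anyone who later pulls the model Artifact can then see exactly which hyperparameters produced it.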

artifact.add_file()

Instead of simultaneously writing a new_file and adding it to the Artifact,

as in the dataset logging examples,

we can also write files in one step

(here, torch.save)

and then add them to the Artifact in another.

Best practice: use new_file when you can, to prevent duplication.
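The two-step pattern can be sketched as below. To keep the example dependency-free, it writes JSON where the tutorial uses `torch.save`; the helper name `save_then_add` and the default file name are our own.

```python
import json


def save_then_add(artifact, weights, path="initialized_model.json"):
    """Write the file ourselves, then attach the finished file to the Artifact."""
    with open(path, "w") as f:   # step 1: write the file
        json.dump(weights, f)
    artifact.add_file(path)      # step 2: add the existing file
    return path
```

The file now exists both at `path` and inside the Artifact, which is the duplication that `new_file` avoids.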

Use a Logged Model Artifact

Just like we could call use_artifact on a dataset,

we can call it on our initialized_model

to use it in another Run.

This time, let’s train the model.

For more details, check out our Colab on

instrumenting W&B with PyTorch.

We’ll run two separate Artifact-producing Runs this time.

Once the first finishes training the model,

the second will consume the trained-model Artifact

by evaluating its performance on the test_dataset.

Also, we’ll pull out the 32 examples on which the network gets the most confused —

on which the categorical_crossentropy is highest.

This is a good way to diagnose issues with your dataset and your model.
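Picking out the most confusing examples comes down to computing a per-example loss and taking the top k. Here is a pure-Python sketch, assuming the model outputs class probabilities; the tutorial does this with PyTorch, and the tiny batch below is made up.

```python
import math


def cross_entropy(probs, label):
    """Categorical cross-entropy for one example: -log p(true class)."""
    return -math.log(max(probs[label], 1e-12))


def hardest_examples(all_probs, labels, k=32):
    """Indices of the k examples with the highest loss, worst first."""
    losses = [cross_entropy(p, y) for p, y in zip(all_probs, labels)]
    order = sorted(range(len(losses)), key=lambda i: losses[i], reverse=True)
    return order[:k]


# Tiny made-up batch: three examples, three classes.
probs = [[0.9, 0.05, 0.05], [0.2, 0.7, 0.1], [0.1, 0.1, 0.8]]
labels = [0, 0, 2]
worst = hardest_examples(probs, labels, k=2)
```

In this batch the second example is the hardest, because the model puts only 0.2 probability on its true class.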

These functions don’t add any new Artifact features, so we won’t comment on them: we’re just using, downloading, and logging Artifacts.